Your AI tools don't know anything about your company

Every team is rolling out Claude, Cursor, and Copilot. None of them know what your company has decided, written, or argued about. So I built the memory layer that does.

on this page9

Every company on earth is rolling out AI tools right now.

Claude. Cursor. Copilot. ChatGPT Enterprise. The procurement budget is real. The Slack channels are full of people sharing prompts. And yet, the moment you actually try to use these tools for work that matters, you hit the same wall.

The model doesn't know anything about your company.

It doesn't know which database you picked and why. It doesn't know that Bob spiked Y.js six months ago and ruled out Automerge for a specific reason. It doesn't know that Project Polaris was renamed twice and is now scoped to ship in a week. It will happily make up an answer instead.

That's the gap I'm closing with Cognia.

How it started

Cognia started as something selfish.

I read a lot. Articles, papers, GitHub issues, half-finished blog posts at 1 AM. I'd find something useful, lose it, and re-google it three weeks later. I built a browser extension that quietly captured what I read, summarized it, and made it searchable. Then I started visualizing it as a graph because lists felt wrong for the way knowledge actually connects.

That worked. Then I tried using it with my friends, and it broke.

A team's memory isn't just web pages. It's Slack threads. It's a Notion doc someone wrote and forgot. It's a draft PR that's 60% done. It's a benchmark file in a folder no one opens. It's a decision Sarah made before she left, that the new engineer is about to relitigate from scratch.

So Cognia grew up. It's now an org-scoped memory layer that captures all of it, connects it, and serves it back when someone needs it.

What "memory" actually means here

A memory in Cognia is not a raw blob of text. That's the part most people get wrong when they say "RAG."

When something gets captured (a page, a doc, a Slack message, a PDF), the system runs it through a pipeline:

- canonicalize and hash it so duplicates collapse

- extract topics, entities, categories, and a structured summary

- score importance and confidence

- embed it for semantic retrieval

- link it to related memories through shared topics, time, and meaning

By the time it's stored, it's not text — it's a retrieval-grade object that knows what it's about and what it relates to. That's the difference between a search engine that returns "documents that contain the word sharding" and one that can answer "catch me up on the sharding work" with the right four sources, in order, with citations.

Capture, without making capture a chore

If memory only works when humans remember to write things down, it doesn't work.

Cognia captures from the surfaces people already use:

- a browser extension that quietly stores meaningful pages as you browse (no, it doesn't capture localhost or your bank tabs)

- a web app for typing things in directly

- document upload, chunked at the section level so citations point to the exact paragraph

- integrations with Drive, Notion, Slack, and Box, with encrypted token storage and webhook-driven sync

The point is that you don't have to think about it. You read, you write, you work — and your team's memory keeps growing in the background.

The Memory Mesh

I cannot stand knowledge bases that show you a list of folders.

Knowledge isn't a folder. It's a network. The Notion doc about Y.js connects to the Slack thread that started the debate, which connects to the benchmark file Bob ran, which connects to the patch that's still living in your fork.

The Memory Mesh is Cognia's 3D visualization of all of that. Nodes are memories. Edges are relationships — semantic, topical, temporal. You can spin it, cluster it, click into a node and trace why it was connected to its neighbors.

It's not a UI flourish. It's the product saying out loud: this is how your team's knowledge actually fits together. The flat list was always a lie.

Search that gives you an answer, not ten blue links

Type a question. Get an answer with citations.

Under the hood, the retrieval stack is doing more than a vector lookup:

- hybrid search blending semantic similarity (Qdrant) with keyword matching

- query classification to pick the right policy

- a reranker that pushes the actually-relevant stuff to the top

- AI answer generation grounded in the retrieved memories, with inline

[1],[2]citations - streaming responses so you don't sit there watching a spinner

Try this query in the live demo: "I just joined and need to ship Project Polaris in 1 week — what do I need to know?"

It comes back with the project status, the open blockers, the decisions that have already been made, and the people who made them. Cited. Eight seconds. Without Cognia, that's a week of catch-up calls.

The same memory, inside the AI tools you already use

This is the part I'm most excited about.

Cognia exposes its memory through an MCP server. That means Claude Code, Cursor, and any other MCP-aware tool can call into your team's memory directly. You stay in the editor. You ask "brief me on POLARIS-23 before I start writing code" and the model answers grounded in real Slack threads, real PRs, real decisions.

The whole pitch of "your AI tools don't know your company" collapses the second the model can actually reach into your company's memory at tool-call time.

The browser extension does a softer version of the same trick — it can inject relevant Cognia context into ChatGPT or Claude.ai prompts as you type, and draft email replies in Gmail and Outlook from the surrounding thread. Same memory. Different surface.

Built like a workspace, not a toy

I didn't want to build a cute demo that falls apart the moment a real team uses it. So the boring stuff is in there too:

- email/password auth with JWT, plus session cookies and rate-limited login

- per-user 2FA, with org-level enforcement if you want it

- session timeout policies and IP allowlists at the org level

- audit logs

- encrypted integration tokens

- BYOK for LLM providers

- a platform API with matter-aware search modes (

matterId,clientId,privileged,securityTags) for legal and regulated workflows

None of this is glamorous. But "we'd love to use you, except for the SSO question" is how good products die at the procurement stage.

Why I built this

Every team I've talked to has a 2026 mandate to ship AI internally. They've all hit the same wall: their data isn't ready. The AI is fine. The retrieval layer is missing.

Notion AI searches Notion. Glean searches your SaaS. Neither of them touches the open web your team actually reads. None of them flow back into the editor where engineers actually work. None of them treat personal context and team context as one thing.

That's the gap. Cognia fills it.

I'm a solo founder building this because I want to use it. The fact that other teams want to use it too is the part I'm still adjusting to.

Try it

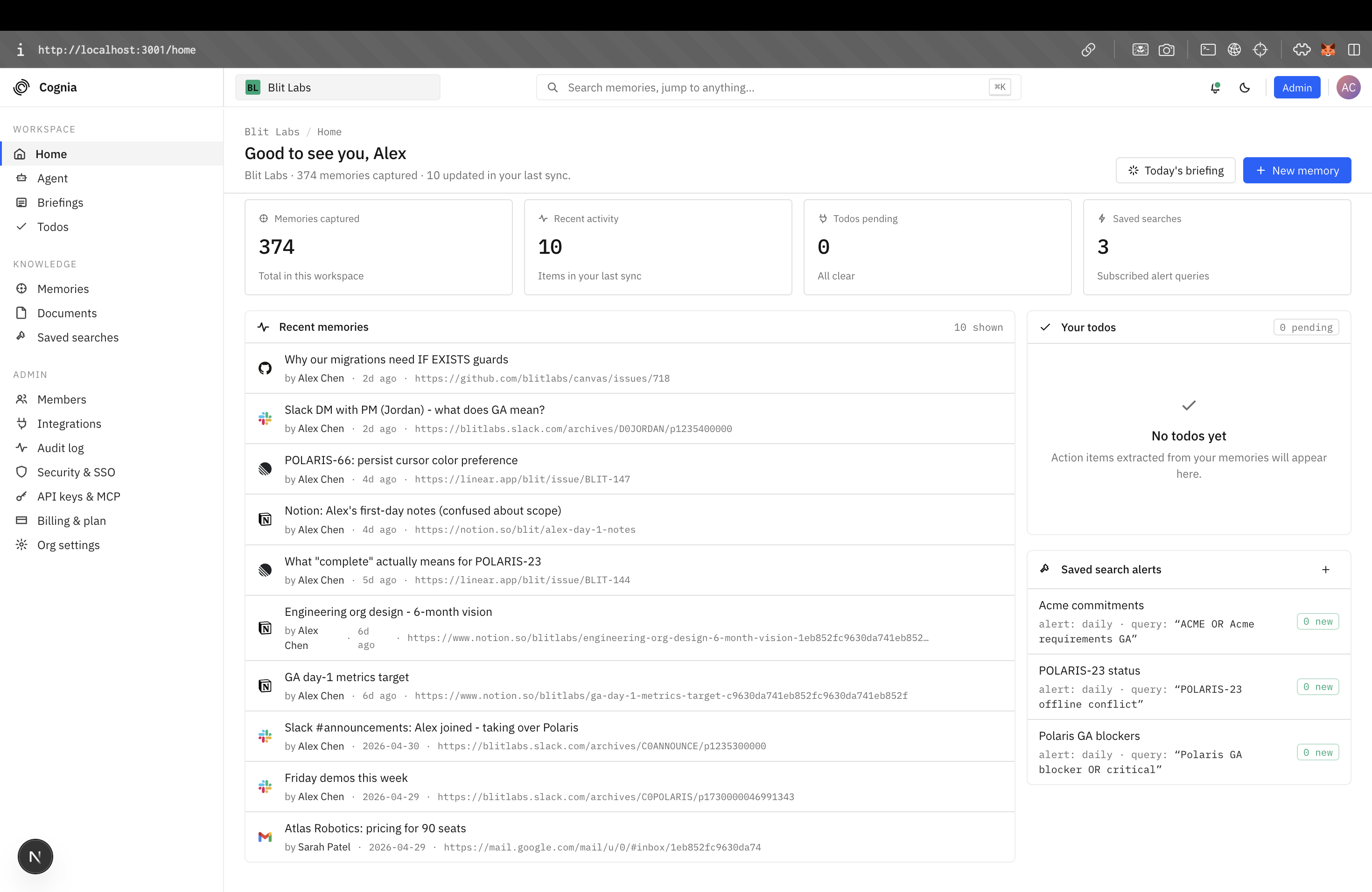

Cognia is live at cogniahq.tech.

There's a seeded "Blit Labs" workspace with ~370 memories spanning a realistic team narrative — handover docs, customer threads, hiring decisions, engineering debates. Sign in as one of the demo accounts and ask it something hard. Watch the citations populate. Click through to the source. See if it actually knows what your AI tools should have known all along.